Last week Microsoft presented their implementation of a multi-touch screen. They decided to create it in the shape of a coffee table.

Examples of applications can be found in the following video:

More videos are available on the official page of MS Surface.

An interesting part of this technology is the way it illuminates the screen and captures fingertips or objects. Instead of using FTIR, Microsoft's approach uses four IR cameras, an IR illuminator, a diffuser and, obviously, a projector. Inside the table, the IR illuminator shines on the diffuser and screen. When a finger touches the surface or an object is placed on the table, IR light is reflected into one of the four cameras. Using software processing techniques, the table can then process multi-touch input.

After seeing Microsoft's videos, some people from the Scientific Visualization and Virtual Reality group and I figured that we could try it out on our current FTIR setup.

By disabling the IR LEDs on the sides of the screen and illuminating the screen with an external IR illuminator, we managed to get it working. However, the results were a bit disappointing: the contrast was not high enough.

Today I had a small chat with David Wallin, the author of touchlib. I told him about the poor results I had with the current touchlib and setup, even with the version he used for what he calls diffused illumination.

Fortunately, he had just submitted a new revision (40) to Subversion, which contained improved filters. After fiddling a bit with the settings, it was now possible to track blobs in the video capture from rear illumination.
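For readers unfamiliar with the term, a "blob" here is a connected bright region in the camera image, such as a fingertip. The sketch below is not touchlib's code. It is a minimal plain-Python illustration of the general idea, assuming a frame that has already been reduced to pure black (0) and white (non-zero) pixels. The function name `find_blobs` and the `min_area` parameter are made up for this example.

```python
from collections import deque


def find_blobs(binary, min_area=1):
    """Return a list of (centroid_x, centroid_y, area) tuples, one per
    4-connected region of non-zero pixels in a row-major 2D list."""
    height = len(binary)
    width = len(binary[0]) if height else 0
    seen = [[False] * width for _ in range(height)]
    blobs = []

    for y in range(height):
        for x in range(width):
            if binary[y][x] == 0 or seen[y][x]:
                continue

            # Breadth-first flood fill starting from this unvisited white pixel.
            queue = deque([(x, y)])
            seen[y][x] = True
            pixels = []
            while queue:
                px, py = queue.popleft()
                pixels.append((px, py))
                for nx, ny in ((px + 1, py), (px - 1, py), (px, py + 1), (px, py - 1)):
                    inside = 0 <= nx < width and 0 <= ny < height
                    if inside and binary[ny][nx] != 0 and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((nx, ny))

            # Ignore regions smaller than min_area (hypothetical noise threshold).
            area = len(pixels)
            if area >= min_area:
                cx = sum(p[0] for p in pixels) / area
                cy = sum(p[1] for p in pixels) / area
                blobs.append((cx, cy, area))

    return blobs


if __name__ == "__main__":
    frame = [
        [255, 255, 0, 0],
        [255, 255, 0, 0],
        [0, 0, 0, 255],
    ]
    for blob in find_blobs(frame):
        print(blob)
```

Tracking a finger over time would then come down to matching the centroids found in one frame to the nearest centroids in the next. That step is left out here.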

Rear illumination experiment:

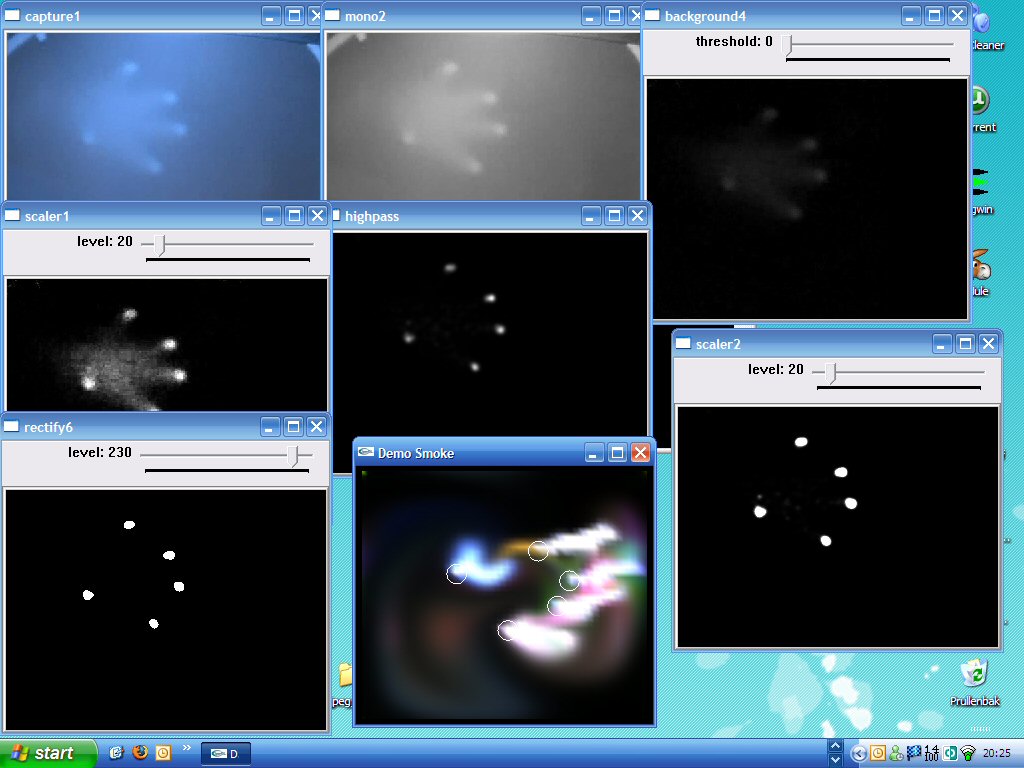

An image of the filters used:

The image shows the following windows:

1. Capture1

This is the video input window (in this case recorded video input).

2. Mono2

The first filter turns the source image into a greyscale image.

3. Background4

This filter subtracts the background from the current scene. Now only the interesting parts (fingertips) should be visible.

4. Scaler1

This filter will amplify the output of Background4 a bit.

5. Highpass

Only bright spots will be given to its output channel.

6. Scaler2

Again, amplify the output of the previous filter.

7. Rectify6

True black and white, as preparation for blob detection in the touchlib core.
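To make the chain above more concrete, here is a rough plain-Python sketch of the same sequence of steps. It is not touchlib's implementation or API. The function names, the default gains and thresholds, and the list-of-lists frame format are all assumptions made for illustration. In particular, the highpass step is modelled as a simple brightness cutoff, following the description above. The real filter may work differently.

```python
def mono(frame_rgb):
    """Greyscale: average the (r, g, b) channels of every pixel."""
    return [[(r + g + b) // 3 for (r, g, b) in row] for row in frame_rgb]


def subtract_background(frame, background):
    """Remove a stored background frame, clamping at 0."""
    return [
        [max(p - b, 0) for p, b in zip(row, bg_row)]
        for row, bg_row in zip(frame, background)
    ]


def scale(frame, gain):
    """Amplify pixel values, clamping at 255."""
    return [[min(int(p * gain), 255) for p in row] for row in frame]


def highpass(frame, cutoff):
    """Assumed behaviour: keep only pixels brighter than `cutoff`."""
    return [[p if p > cutoff else 0 for p in row] for row in frame]


def rectify(frame, level):
    """Turn the frame into true black (0) and white (255)."""
    return [[255 if p >= level else 0 for p in row] for row in frame]


def run_chain(frame_rgb, background, gain1=2.0, cutoff=60, gain2=2.0, level=128):
    """Apply the steps in the order listed above (all defaults are hypothetical)."""
    frame = mono(frame_rgb)                         # Mono
    frame = subtract_background(frame, background)  # Background
    frame = scale(frame, gain1)                     # Scaler1
    frame = highpass(frame, cutoff)                 # Highpass
    frame = scale(frame, gain2)                     # Scaler2
    return rectify(frame, level)                    # Rectify


if __name__ == "__main__":
    background = [[10, 10, 10], [10, 10, 10]]
    frame_rgb = [
        [(10, 10, 10), (90, 90, 90), (12, 12, 12)],
        [(10, 10, 10), (20, 20, 20), (10, 10, 10)],
    ]
    for row in run_chain(frame_rgb, background):
        print(row)
```

In this toy frame only the one bright pixel (the "fingertip") survives as white, while the faint pixels are removed along the way. With rear illumination the contrast between touch and background is low, which is why the gains and thresholds need so much fiddling.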

Another interesting feature will be fiducial detection, which will be added (soon) to the touchlib library. This would make it possible to either use markers to manipulate your desktop or even objects/devices.

* update (11-06-2007) *

Two example objects (a cellular phone and a coffee cup) placed on the surface while using rear illumination:

One response

Hey, I noticed you originally had FTIR and then disabled the LEDs and switched to DI. Did you ever try both? I want the option to detect the fiducial but have the FTIR for more consistent touch results in different lighting conditions… advice?